
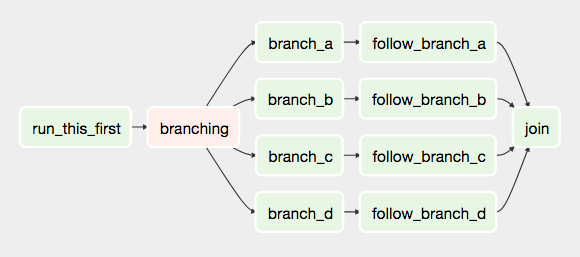

The following are the core concepts in Airflow DAGs:

Airflow DAGs represent a set of tasks that must be executed to complete a workflow. Each task is defined as a node in a graph, and the edges between nodes represent the dependencies between tasks.

One of the most common Apache Airflow example DAGs is an ETL (Extract, Transform, Load) pipeline, where data is extracted from one source, transformed into a different format, and loaded into a target destination. Another Airflow DAG example is automating the workflow of a data science team, where tasks such as data cleaning, model training, and model deployment are represented as individual nodes in an Airflow DAG.

Airflow DAGs provide a powerful and flexible way to manage complex workflows. Because every task and every dependency is declared explicitly, they make it easier to monitor and troubleshoot data pipelines and help organizations process large amounts of data reliably.

What is a DAG file in Airflow?

DAG stands for Directed Acyclic Graph: "directed" because each dependency points from an upstream task to a downstream task, and "acyclic" because no task may depend, directly or indirectly, on itself. A DAG file in Airflow is a Python script that defines and organizes the tasks in a workflow. It specifies the order in which tasks should be executed and their dependencies, allowing for efficient scheduling and monitoring of data pipelines in Airflow. Source: Airflow DAG Documentation
